DNA & Forensic sample management solutions.

Highly configurable software for forensic labs and public health agencies to comply with complex regulations, enhance transparency, and gain efficiency.

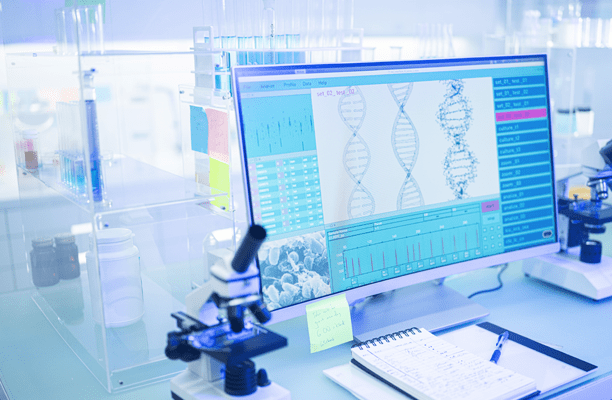

Lab technicians, DNA analysts, and others working in public health and safety must meet stringent regulatory demands and manage heavy workloads.

Our highly configurable software solutions are built with compliance safeguards to meet regulatory demands and align with your current operations, ensuring efficient and transparent processing and tracking of DNA and forensic samples. Automating routine tasks and troubleshooting incidents reduce the risk of errors and contamination and improve the quality of data, compliance, and outcomes.

Our deep domain expertise in DNA and forensic laboratory processes and proven track record as a trusted partner to some of the largest forensic DNA labs in North America enable us to provide innovative and market-adaptable solutions to meet your specific needs.

Explore InVita's DNA & Forensic sample management product suite.

43% more samples processed and 30% more cases processed using STACS®Casework.

GRADE A RATING for “exceptional customer service response” by the State of Texas.

The leading forensic DNA sample tracking and control system in the United States.

150,000 SPECIMENS tracked each year from 200 hospitals across Ontario, Canada.

Sample management solutions to reduce risk and save valuable time.

InVita software is used by forensic DNA labs and law enforcement to fight crime, by public health agencies to run sexual assault kit tracking and newborn screening programs, and by pharmaceutical companies to keep consumers safe from counterfeit drugs.

Our highly scalable solutions help customers meet performance, transparency, and compliance goals.

Integrated system for efficiency and compliance.

InVita’s solutions integrate with one another and with our customers’ existing systems, allowing data to flow seamlessly and securely between labs and agencies, reducing the need for duplicate data entry and increasing end-to-end value.